ImageGeneratorML

Generate Images on iPhone, iPad or your Mac with Machine Learning

TL;DR. This application is built on top of ml-stable-diffusion and uses the Stable Diffusion model to generate images locally on your device. Supported devices: iPhone 12 Pro or later (iOS 16.2+), iPad (iPadOS 16.2+), and MacBook with M1/M2 (macOS 13.1+).

Background

Every year Apple releases a more powerful device than the year before. And if you aren’t a gamer, most of the time it is hard to see what your new device can do.

At the end of November 2022 Apple released a library that lets you use Machine Learning models to generate images from text input. It is based on the Stable Diffusion models. You have probably heard about DALL-E 2, and maybe about Stable Diffusion. Both generate images from text entered by the user. There are some differences in how DALL-E 2 and Stable Diffusion generate images, but the most important one is that Stable Diffusion was released publicly, while DALL-E 2 is available only via cloud services.

With the help of Apple’s library ml-stable-diffusion you can now really see what your new iPhone, iPad or Mac can do. By just writing a sentence of what you want to see, you can generate an image in less than a minute from the Stable Diffusion Machine Learning Model.

A photo of an astronaut riding a horse on mars

Building an application

About a week ago I was researching whether there are any Machine Learning (ML) models that can generate simple images directly on iPhone, and to my surprise discovered ml-stable-diffusion, which had been released a day before my search.

I decided to give it a try with the idea of releasing a simple application, free of charge. I cannot take credit for work whose main features were built by somebody else.

Unfortunately, I ran into some issues while preparing this application for the public:

- This library is available only for Apple Silicon architecture, but currently Apple does not allow publishing Mac applications in the App Store that don’t include Intel architecture as well. That means no native Mac application at this time. But you can install the iPad version on Mac, so that solved this problem.

- The model is pretty big, close to 3GB, so the application is a large download. For resources that big, Apple suggests using On-Demand Resources, but even those cannot be that big unless you host them on your own server, and traffic is expensive, so I just bundled the model for now. Application size is about 2.5GB.

- It is still very unstable. Depending on many variables, the same job can finish successfully or crash, because the system terminates the app when it runs out of memory. I have preconfigured the app with settings that work.

I have decided to give it a try and let the public try this library on their devices to generate any image they want based on the text they enter.

System requirements

The library requires at least iOS 16.2, iPadOS 16.2 or macOS 13.1. At the time of writing, all of those OS versions were in Release Candidate state, so if you aren’t running a beta, you most likely don’t meet the minimum requirements. That said, I was able to run this library on macOS 13.0.1 without any issues, but could not finish image generation on iOS 16.0 on my iPhone 14 Pro.

The library ml-stable-diffusion has minimum requirements of iPhone 12 and iOS 16.2, but the library and the Machine Learning model are very memory hungry. I also have an iPhone 13 Mini, which has only 4GB of RAM, compared to the iPhone 14 Pro, which has 6GB of RAM. I could not finish image generation on the iPhone 13 Mini. I would say that you need an iPhone Pro model, an iPad with an M1/M2 chip, or a MacBook with an M1/M2 processor to run this library.
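The requirements above could be checked up front with a small pre-flight test. This is only a sketch and not code from the application: `DeviceCheck`, `checkDevice` and the 5 GiB memory cutoff are hypothetical, and the cutoff is an assumption chosen only to separate the 4GB device that failed from the 6GB device that worked. `ProcessInfo` and `OperatingSystemVersion` are standard Foundation APIs.

```swift
import Foundation

/// Hypothetical result type for a pre-flight check before loading the model.
struct DeviceCheck {
    let osIsNewEnough: Bool
    let hasEnoughMemory: Bool
    var canLikelyRun: Bool { osIsNewEnough && hasEnoughMemory }
}

/// Compares an OS version and RAM size against the given minimums.
/// `minimumMemoryBytes` defaults to 5 GiB, an assumed cutoff (not a value
/// documented by ml-stable-diffusion).
func checkDevice(os: OperatingSystemVersion,
                 physicalMemoryBytes: UInt64,
                 minimumOS: OperatingSystemVersion,
                 minimumMemoryBytes: UInt64 = 5 * 1024 * 1024 * 1024) -> DeviceCheck {
    // Tuples compare element by element: major first, then minor, then patch.
    let current = (os.majorVersion, os.minorVersion, os.patchVersion)
    let required = (minimumOS.majorVersion, minimumOS.minorVersion, minimumOS.patchVersion)
    return DeviceCheck(osIsNewEnough: current >= required,
                       hasEnoughMemory: physicalMemoryBytes >= minimumMemoryBytes)
}

// Minimum OS versions as stated in this README.
#if os(macOS)
let minimumOS = OperatingSystemVersion(majorVersion: 13, minorVersion: 1, patchVersion: 0)
#else
let minimumOS = OperatingSystemVersion(majorVersion: 16, minorVersion: 2, patchVersion: 0)
#endif

// Check the device the code is currently running on.
let result = checkDevice(os: ProcessInfo.processInfo.operatingSystemVersion,
                         physicalMemoryBytes: ProcessInfo.processInfo.physicalMemory,
                         minimumOS: minimumOS)
print("OS new enough: \(result.osIsNewEnough), enough memory: \(result.hasEnoughMemory)")
```

A check like this can only warn the user. As noted above, macOS 13.0.1 worked in practice even though it is below the stated minimum.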

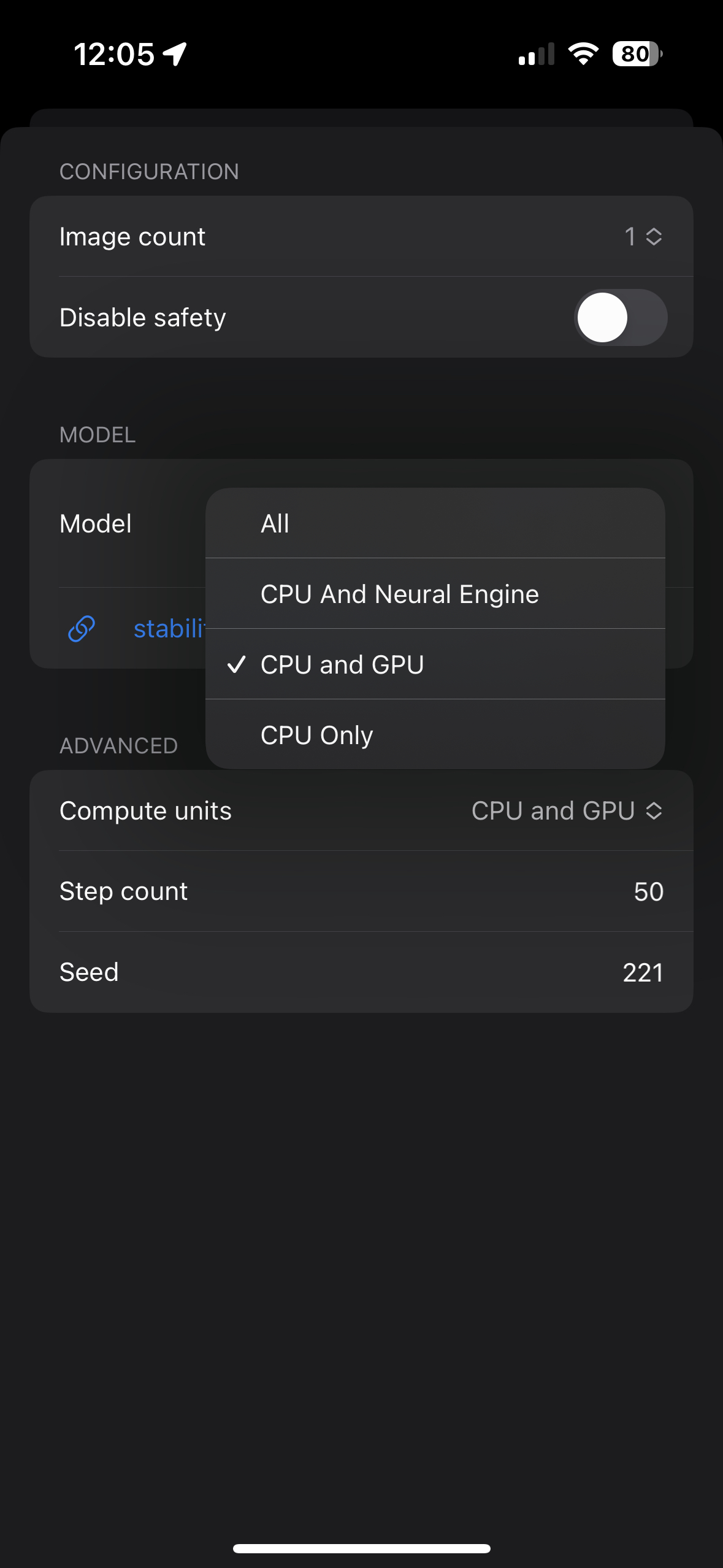

Configuration

You can lower the Step Count to see if the results are still satisfactory. The model can produce great results with as few as 20 steps.

Running on iPhone

By default, the application should set configuration to use CPU and GPU as compute units - it takes about a minute to finish the task. The first task will take longer, as loading resources can take some time. This is based on the test of running the application on iPhone 14 Pro.

I would assume that any iPhone with 6GB of RAM can handle it, but it needs to run iOS 16.2+ and be iPhone 12 Pro or later.

If it is crashing, you can try to free up the memory by restarting your phone.

Running on iPad

I was testing it on my iPad Pro M2 with 16GB of RAM (2TB version). You can use All compute resources, and it definitely takes way less time to finish the task, compared to iPhone.

I would assume any iPad Pro M1/M2 can handle it. If your iPad has 8GB of memory, you might want to adjust the compute units and change them to CPU and GPU only.

Running on macOS

I have a 14-inch MacBook Pro with M1 Max and 64GB of RAM. Performance feels similar to the iPad.

My guess is that any M1/M2 powered Mac can handle this application. If you are using the 8GB version, you might want to try changing compute units from All to CPU and GPU, or CPU and Neural Engine.
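The compute unit suggestions from the three sections above can be summarized as a small decision function. This is a sketch under assumptions, not the application's code: `ComputeUnitChoice`, `DeviceKind` and `suggestedComputeUnits` are hypothetical names, and treating "more than 8GB" as the cutoff for All is my reading of the advice above. In a Core ML app the three choices would correspond to the `MLComputeUnits` cases `.cpuAndGPU`, `.cpuAndNeuralEngine` and `.all`.

```swift
import Foundation

/// Hypothetical mirror of the compute unit setting shown in the app.
enum ComputeUnitChoice: String {
    case cpuAndGPU = "CPU and GPU"
    case cpuAndNeuralEngine = "CPU and Neural Engine"
    case all = "All"
}

/// Hypothetical device categories matching the sections of this README.
enum DeviceKind {
    case iPhone, iPad, mac
}

/// Suggests a starting compute unit setting for a device.
/// The rules follow the README text; the 8GB cutoff is an assumption.
func suggestedComputeUnits(for device: DeviceKind, memoryGB: Int) -> ComputeUnitChoice {
    switch device {
    case .iPhone:
        // iPhone default described above: CPU and GPU.
        return .cpuAndGPU
    case .iPad, .mac:
        // 8GB models: fall back to CPU and GPU. Larger models: All.
        return memoryGB > 8 ? .all : .cpuAndGPU
    }
}

print(suggestedComputeUnits(for: .iPad, memoryGB: 16).rawValue)
print(suggestedComputeUnits(for: .mac, memoryGB: 8).rawValue)
```

On an 8GB Mac, CPU and Neural Engine is the other option mentioned above. The function returns only one suggestion, so that alternative is left for the user to try manually.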

Use cases

Pencil sketch of bear head with paw

I feel like the most common use cases are:

- Getting some ideas for making logos, drawings, designs. For example, the logo of this application was generated by this application.

- Learning to draw - you can use a prompt like "pencil sketch of bear head with paw" and use the result as a template for practicing drawing. For example, the image above was generated with the prompt "Pencil sketch of bear head with paw". It didn’t get a paw, but you can still practice drawing with it.

- For fun and creativity.

But make sure to read the license.

Features

- All the generated images are synced with iCloud.

- You can drag and drop images to Finder or other applications.

- You can export images.

- You can submit a lot of tasks that will be executed in sequence, and review them later (don’t do that on iPhone).
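Running submitted tasks in sequence means only one generation is in flight at a time, which matters given the memory limits described earlier. A minimal sketch of such a first-in, first-out queue follows. `GenerationTask` and `SequentialTaskQueue` are hypothetical names, the `generate` closure stands in for the real image generation call, and none of this is taken from the application's source.

```swift
import Foundation

/// Hypothetical description of one image generation job.
struct GenerationTask {
    let prompt: String
    let stepCount: Int
}

/// Hypothetical FIFO queue that runs tasks strictly one after another.
final class SequentialTaskQueue {
    private var pending: [GenerationTask] = []
    private(set) var completed: [GenerationTask] = []

    /// Adds a task to the end of the queue without running it.
    func submit(_ task: GenerationTask) {
        pending.append(task)
    }

    /// Runs every pending task in submission order, one at a time.
    /// `generate` is a placeholder for the actual image generation.
    func runAll(using generate: (GenerationTask) -> Void) {
        while !pending.isEmpty {
            let task = pending.removeFirst()
            generate(task)
            completed.append(task) // kept so results can be reviewed later
        }
    }
}

let queue = SequentialTaskQueue()
queue.submit(GenerationTask(prompt: "A photo of an astronaut riding a horse on mars", stepCount: 20))
queue.submit(GenerationTask(prompt: "Pencil sketch of bear head with paw", stepCount: 20))
queue.runAll { task in
    print("Generating: \(task.prompt) (\(task.stepCount) steps)")
}
```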

Download

The application is available with TestFlight - just accept the invitation, install it and use it.

License

Please review the CreativeML Open RAIL++-M License, especially the Distribution and Redistribution section.

Privacy Policy

We believe very strongly in our customers’ right to privacy. Our customer records are not for sale or trade, and we will not disclose our customer data to any third party except as may be required by law.

Any information that you provide to us in the course of interacting with our sales or technical support departments is held in strict confidence. This includes your contact information (including, but not limited to your email address and phone number), as well as any data that you supply to us in the course of a technical support interaction.

Support

Please email us any suggestions, ideas, questions or discovered bugs to support@loshadki.app